
BINARY CROSS ENTROPY TRIAL

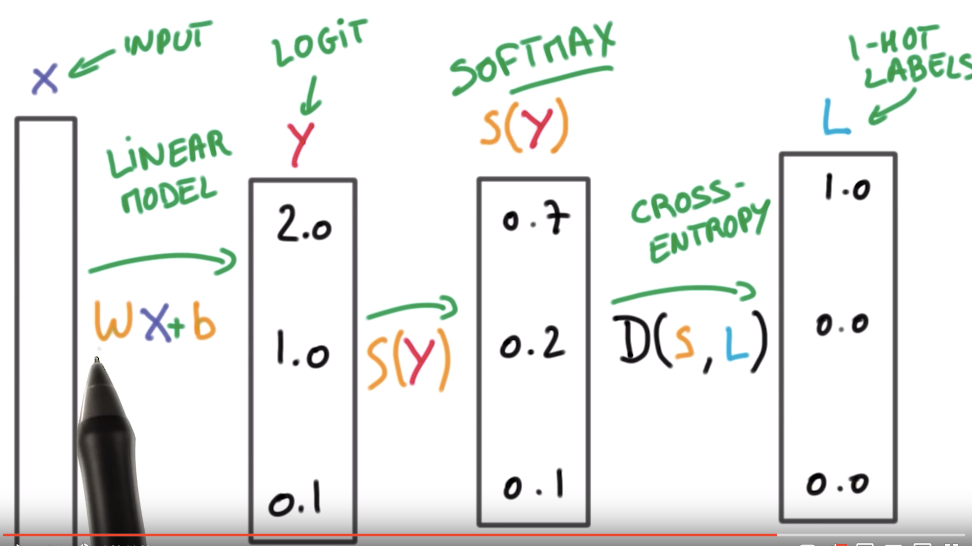

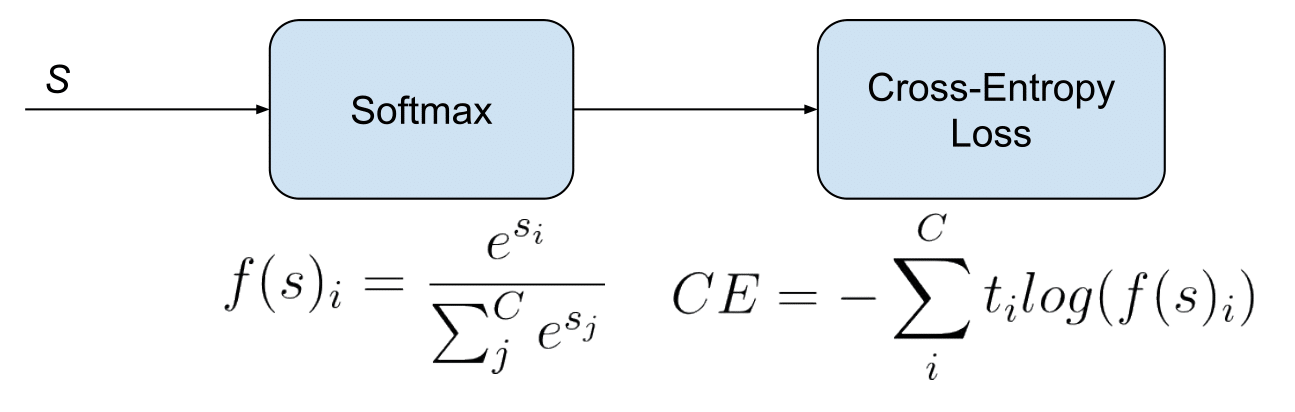

Using probability as a shovel, we'll dig a little deeper into binary cross-entropy (BCE) loss, the quantity we optimize to train logistic regression. We'll first go through an intuitive introduction to BCE loss in this episode before covering the math behind it.
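As a rough illustration of the intuition, here is a minimal sketch of BCE loss in plain Python. It assumes the usual definition, the mean of -(y log p + (1 - y) log(1 - p)) over the examples, which the text above does not spell out. The function name, the `eps` clamp, and the sample values are hypothetical choices for illustration only.

```python
import math

def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Mean BCE over paired labels (0 or 1) and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        # Clamp the probability so log() never receives exactly 0 (assumed safeguard).
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

# Same labels, three levels of prediction quality (illustrative values).
confident = binary_cross_entropy([1, 0], [0.9, 0.1])
unsure = binary_cross_entropy([1, 0], [0.5, 0.5])
wrong = binary_cross_entropy([1, 0], [0.1, 0.9])
```

The loss grows as the predicted probability moves away from the true label, so `confident` is smaller than `unsure`, which is smaller than `wrong`.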

BINARY CROSS ENTROPY GENERATOR

We'll now begin exploring how to measure the performance of the generator and discriminator networks during training with a common loss function called binary cross entropy loss, or BCE loss. Ultralytics uses PyTorch's binary cross-entropy with logits loss to compute the loss for class probability and object score.
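A "with logits" loss takes the raw score before the sigmoid rather than a probability. The sketch below shows, in plain Python, one common numerically stable way to write it, max(x, 0) - x*y + log(1 + exp(-|x|)), next to the naive sigmoid-then-log version. This is an assumption about the math, not PyTorch's or Ultralytics' actual source, and both function names are hypothetical.

```python
import math

def bce_with_logits(logit, target):
    """Stable BCE on a raw score: max(x, 0) - x*y + log(1 + exp(-|x|))."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))

def bce_from_probability(logit, target):
    """Naive version: apply the sigmoid first, then take logs."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(target * math.log(p) + (1 - target) * math.log(1 - p))

# For moderate scores the two forms agree.
stable = bce_with_logits(2.0, 1.0)
naive = bce_from_probability(2.0, 1.0)

# For an extreme score the stable form still returns a finite value,
# whereas the naive form would end up taking log(0).
extreme = bce_with_logits(1000.0, 0.0)
```

Working on the logit directly avoids the rounding of the sigmoid output to exactly 0 or 1, which is the usual motivation for fusing the two steps.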

For Wolfram's CrossEntropyLossLayer, the input should be a vector of probabilities ("CrossEntropyLossLayer," Wolfram Language & System Documentation Center, accessed 19 November 2021). For the Keras categorical cross-entropy loss, labels are expected in a one-hot representation, with one floating point value per class for each feature. If you want to provide labels as integers, use the SparseCategoricalCrossentropy loss instead.
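The difference between one-hot and integer labels can be sketched in plain Python. The two functions below are hypothetical stand-ins written for illustration, not the Keras implementations, and they assume the predicted probabilities are strictly positive.

```python
import math

def categorical_cross_entropy(one_hot, probs):
    """Cross entropy with a one-hot target: one float per class."""
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs) if t > 0)

def sparse_categorical_cross_entropy(label, probs):
    """Same loss, but the target is the integer index of the true class."""
    return -math.log(probs[label])

probs = [0.1, 0.7, 0.2]                                       # predicted distribution over 3 classes
loss_one_hot = categorical_cross_entropy([0.0, 1.0, 0.0], probs)
loss_sparse = sparse_categorical_cross_entropy(1, probs)      # class index 1
```

Both calls give the same value; the integer form simply skips building the one-hot vector.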

BINARY CROSS ENTROPY HOW TO

For CrossEntropyLossLayer in its binary form, the input and target should be scalar values between 0 and 1, or arrays of these. When operating on multidimensional inputs, CrossEntropyLossLayer effectively threads over any extra array dimensions to produce an array of losses and returns the mean of these losses. In PyTorch, the functional form of the logits-based loss is `torch.nn.functional.binary_cross_entropy_with_logits()`. The categorical cross-entropy loss, by contrast, is the one to use when there are two or more label classes.
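The "thread over the array, then take the mean" behavior can be imitated in plain Python with nested lists. This is only a sketch of the idea, not the Wolfram implementation: the helper names are hypothetical, and inputs are assumed to lie strictly between 0 and 1.

```python
import math

def elementwise_bce(inputs, targets):
    """Return a flat list of BCE losses for scalars or matching nested lists."""
    if isinstance(inputs, (list, tuple)):
        losses = []
        for x, t in zip(inputs, targets):
            losses.extend(elementwise_bce(x, t))
        return losses
    # Scalar case: both values are numbers between 0 and 1.
    return [-(targets * math.log(inputs) + (1 - targets) * math.log(1 - inputs))]

def mean_bce(inputs, targets):
    """Mean of the elementwise losses, whatever the nesting depth."""
    losses = elementwise_bce(inputs, targets)
    return sum(losses) / len(losses)

batch_inputs = [[0.9, 0.2], [0.6, 0.4]]    # illustrative 2x2 array of probabilities
batch_targets = [[1.0, 0.0], [1.0, 0.0]]
batch_loss = mean_bce(batch_inputs, batch_targets)
scalar_loss = mean_bce(0.5, 1.0)           # scalars work as well
```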

Binary cross entropy is the cross entropy loss when there are only two classes involved, and it is reliant on sigmoid activation functions. Entropy is used to indicate some kind of disorder or uncertainty. In CrossEntropyLossLayer, the target is a real array of rank n or an integer array of rank n-1.

For multi-label classification, the idea is the same: each label is treated as its own two-class problem. If we formulate binary cross entropy this way, with targets $y$ and $1-y$ and predictions $\hat{y}$ and $1-\hat{y}$ for the two classes, then we can use the general cross-entropy loss formula, $-\sum_{c} y_c \log \hat{y}_c$, summed over the classes. Notice how this is the same as binary cross entropy.
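The two-class formulation can be checked numerically. The sketch below assumes the general cross-entropy formula is the negative sum of target times log-prediction over the classes, and that a multi-label loss averages one two-class loss per label. The function names and sample values are hypothetical, chosen only for illustration.

```python
import math

def cross_entropy(targets, probs):
    """General cross entropy: -sum over classes of target * log(prediction)."""
    return -sum(t * math.log(p) for t, p in zip(targets, probs) if t > 0)

def two_class_cross_entropy(y, p):
    """Binary case written as two classes: (y, 1 - y) against (p, 1 - p)."""
    return cross_entropy([y, 1 - y], [p, 1 - p])

def multi_label_loss(labels, probs):
    """Treat every label as an independent two-class problem and average."""
    per_label = [two_class_cross_entropy(y, p) for y, p in zip(labels, probs)]
    return sum(per_label) / len(per_label)

positive = two_class_cross_entropy(1, 0.8)    # reduces to -log(0.8)
negative = two_class_cross_entropy(0, 0.8)    # reduces to -log(1 - 0.8)
tags = multi_label_loss([1, 0, 1], [0.9, 0.2, 0.7])
```

Because only the class with a nonzero target contributes, the two-class sum collapses to the familiar binary cross entropy expression.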